The Apache Kafka universe at your fingertips

We believe that data streaming marks the beginning of a new era, so we're making it accessible to you and your colleagues. Kadeck is your powerful Desktop and Web UI for Apache Kafka that empowers Developers, Operations, Infrastructure, and Business teams across the Enterprise.

Command deck for data streaming

Kadeck is your team's command deck, providing best-in-class tools to navigate your enterprise in the Apache Kafka universe. Develop and operate data applications, optimize Apache Kafka performance, and make data streaming accessible.

Best-in-class data browser

Kadeck’s data browser delivers spreadsheet simplicity for complex real-time data streams. Sort, filter, and control all in one place.

AI Health Assistant

The Health Assistant is your AI-powered problem solver for achieving operational excellence. Minimize downtime, maximize DevOps productivity.

“Handling thousands of messages per second is a breeze with Kadeck. It makes it very easy for our team to find, correct, and reprocess messages at any scale, and enhance our operational efficiency.”

FlowView visualizes data flows between Consumers, Producers, and Topics.

Consumers

Manage consumer groups and offsets, and swiftly detect issues with the integrated Health Assistant.

Schema Registry

Effortlessly manage schemas and schema versions with the built-in schema editor and catalog.

Kafka Connect

Monitor and edit your connectors with auto-complete and parameter documentation.

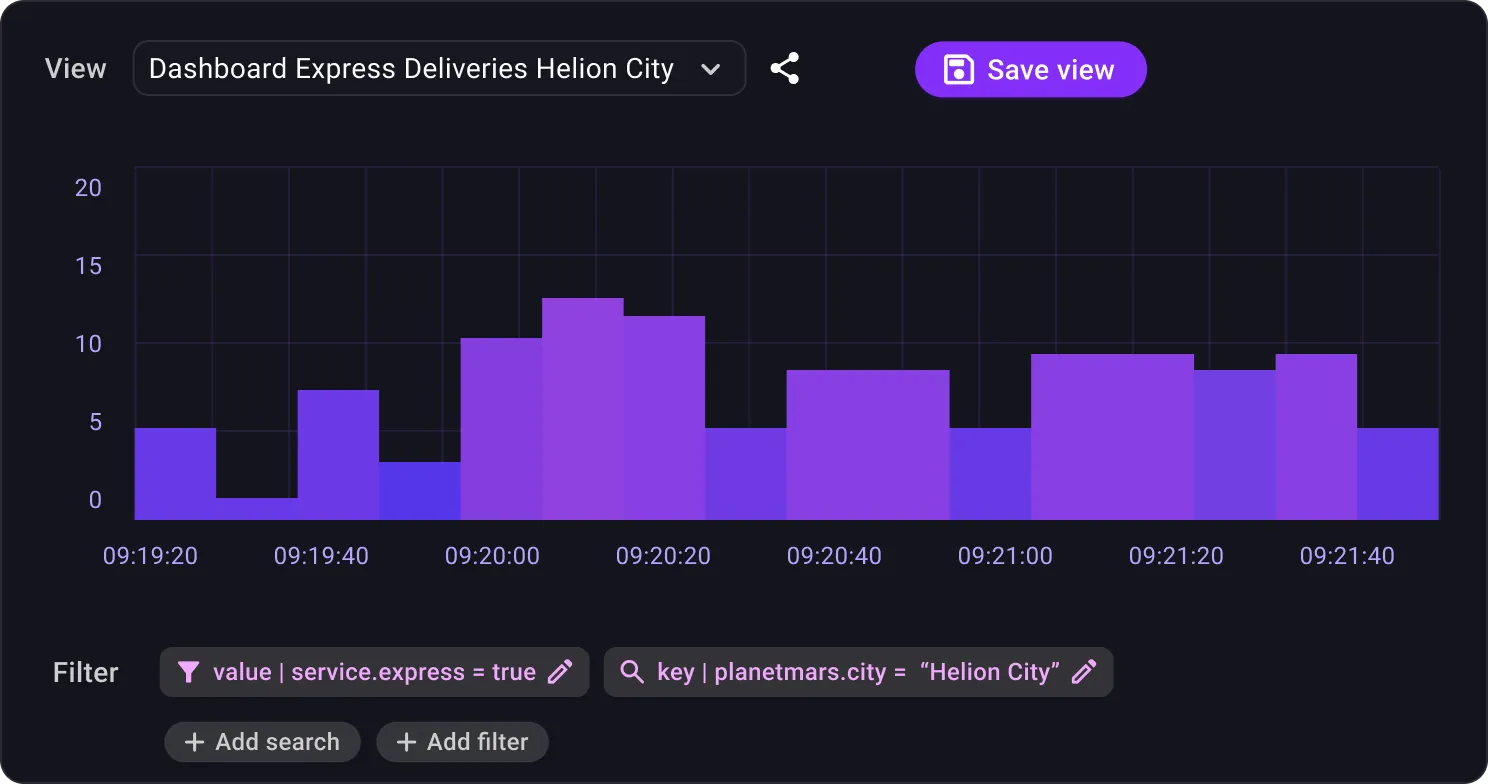

Data views

Create spreadsheet-like representations of data with JavaScript Transformations, Advanced Filtering, and Sorting. It’s easy, yet powerful.

Share & collaborate

Work with colleagues to fix data pipeline problems or create live spreadsheet-style views for business analysis.

Never outdated, always relevant API documentation

Create attractive topic documentation whose schemas and metrics stay up to date with no additional effort.

🚦 Mars Traffic notifications

This topic contains a real-time stream for all traffic-related notifications and updates from the integrated smart city infrastructure. This encompasses a wide array of data points and traffic events that significantly affect the city's traffic dynamics. Updates include real-time information about traffic jams, road closures, accidents, emergency situations, construction work, and detours. Other notifications include public transportation status, parking availability, and even congestion pricing alerts for high-traffic zones during peak hours.

Data Catalog. Effortlessly organize thousands of topics with labels and data owners across all clusters for a complete overview.

Great security supports productivity

Don’t compromise security for speed. We’ve built security into every aspect of Kadeck to ensure highly secure streaming environments and data applications. No slowdown required.

"In the Apache Kafka projects of our clients, one often can't see the forest for the trees due to the numerous topics. With Kadeck, we can set up a central data-streaming platform for IT and the business to keep track of everything - indispensable for developers, business, and operations!"

Join the Kadeck Universe

Experience the most powerful Apache Kafka monitoring platform.